| Name | Modified | Size | Downloads / Week |
|---|---|---|---|
| agentgateway-darwin-arm64 | 2026-03-16 | 53.8 MB | |
| agentgateway-darwin-arm64.sha256 | 2026-03-16 | 100 Bytes | |
| agentgateway-linux-amd64 | 2026-03-16 | 58.8 MB | |
| agentgateway-linux-amd64.sha256 | 2026-03-16 | 99 Bytes | |
| agentgateway-linux-arm64 | 2026-03-16 | 53.3 MB | |
| agentgateway-linux-arm64.sha256 | 2026-03-16 | 99 Bytes | |
| agentgateway-windows-amd64.exe | 2026-03-16 | 63.4 MB | |
| agentgateway-windows-amd64.exe.sha256 | 2026-03-16 | 105 Bytes | |
| README.md | 2026-03-13 | 38.4 kB | |
| v1.0.0 source code.tar.gz | 2026-03-13 | 4.2 MB | |
| v1.0.0 source code.zip | 2026-03-13 | 5.1 MB | |
| Totals: 11 Items | | 238.6 MB | 0 |

🎉 Welcome to the 1.0.0 release of the agentgateway project!

Agentgateway is a production-ready gateway designed for cloud native environments, high scale, and native AI and Kubernetes workload support.

Agentgateway v1.0 represents a major milestone for the project, cementing its place as a production-ready foundation for managing AI agent traffic at scale. This release comes with a number of new features and improvements discussed below.

But first, I want to take a moment to look back on the project's growth. Agentgateway started exactly 1 year ago (🎂), and has already seen tremendous momentum. 2,000 stars and 1,000,000 image pulls later, Agentgateway has already had success in large scale production deployments across the globe. A special thanks to the 115 contributors who made this possible!

For more information, see the Standalone release notes and Kubernetes release notes.

Artifacts

Docker images are available:

- `cr.agentgateway.dev/agentgateway:v1.0.0`

- `cr.agentgateway.dev/controller:v1.0.0`

Helm charts are available:

- `cr.agentgateway.dev/charts/agentgateway:v1.0.0`

- `cr.agentgateway.dev/charts/agentgateway-crds:v1.0.0`

Binaries are available below.

Quick Start

Follow the Kubernetes or Standalone quick start guide to get started!

🔥 Breaking changes

New Kubernetes release version pattern

Previously, when running on Kubernetes, Agentgateway was deployed via Kgateway and inherited its versioning. Because Agentgateway was also independently versioned, two versions were in play at once (such as v2.2 for the control plane and v0.12 for the data plane).

This is the first release of Agentgateway that is entirely decoupled from Kgateway. There is now one shared version for the control plane, data plane, and helm charts (v1.0.0).

Please be advised that v1.0 is newer than v2.2.

Because of this, the Helm paths changed as follows:

- CRDs: `oci://cr.agentgateway.dev/charts/agentgateway-crds`

- Control plane: `oci://cr.agentgateway.dev/charts/agentgateway`

Make sure to update any CI/CD workflows and processes to use the new Helm chart locations.

XListenerSet API promoted to ListenerSet

The experimental XListenerSet API is promoted to the standard ListenerSet API in Kubernetes Gateway API version 1.5.0. You must install the standard channel of the Kubernetes Gateway API to get the ListenerSet API definition. If you use XListenerSet resources in your setup today, update the resource kind from XListenerSet to ListenerSet and the apiVersion from gateway.networking.x-k8s.io/v1alpha1 to gateway.networking.k8s.io/v1, as shown in the following examples.

Old XListenerSet example:

    :::yaml
    apiVersion: gateway.networking.x-k8s.io/v1alpha1
    kind: XListenerSet
    metadata:
      name: http-listenerset
      namespace: httpbin
    spec:
      parentRef:
        name: agentgateway-proxy-http
        namespace: agentgateway-system
        kind: Gateway
        group: gateway.networking.k8s.io
      listeners:
      - protocol: HTTP
        port: 80
        name: http
        allowedRoutes:
          namespaces:
            from: All

Updated ListenerSet example:

    :::yaml
    apiVersion: gateway.networking.k8s.io/v1
    kind: ListenerSet
    metadata:
      name: http-listenerset
      namespace: httpbin
    spec:
      parentRef:
        name: agentgateway-proxy-http
        namespace: agentgateway-system
        kind: Gateway
        group: gateway.networking.k8s.io
      listeners:
      - protocol: HTTP
        port: 80
        name: http
        allowedRoutes:
          namespaces:
            from: All
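
For setups with many manifests, the kind and apiVersion change described above can be applied mechanically. The following Python sketch is illustrative only: `migrate_listener_set` is a hypothetical helper that is not part of agentgateway, and it assumes each manifest has already been parsed into a plain dict (for example, by a YAML library of your choice).

```python
import copy

OLD_API_VERSION = "gateway.networking.x-k8s.io/v1alpha1"
NEW_API_VERSION = "gateway.networking.k8s.io/v1"


def migrate_listener_set(manifest: dict) -> dict:
    """Return a copy of the manifest with kind and apiVersion updated.

    Manifests that are not XListenerSet resources are copied unchanged.
    """
    migrated = copy.deepcopy(manifest)
    is_old = (
        manifest.get("kind") == "XListenerSet"
        and manifest.get("apiVersion") == OLD_API_VERSION
    )
    if is_old:
        migrated["apiVersion"] = NEW_API_VERSION
        migrated["kind"] = "ListenerSet"
    return migrated


# Example input mirroring the old XListenerSet manifest shown above.
old = {
    "apiVersion": OLD_API_VERSION,
    "kind": "XListenerSet",
    "metadata": {"name": "http-listenerset", "namespace": "httpbin"},
    "spec": {
        "parentRef": {"name": "agentgateway-proxy-http", "kind": "Gateway"},
        "listeners": [{"protocol": "HTTP", "port": 80, "name": "http"}],
    },
}
new = migrate_listener_set(old)
print(new["apiVersion"], new["kind"])
```

Only the two top-level fields change; `metadata` and `spec` are carried over as-is, which matches the two examples above.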

CEL 2.0

This release includes a major refactor to the CEL implementation in agentgateway that brings substantial performance improvements and enhanced functionality. Individual CEL expressions are now 5-500x faster, which has improved end-to-end proxy performance by 50%+ in some tests. For more details on the performance improvements, see this blog post on CEL optimization.

The following user-facing changes were introduced:

- Function name changes: For compatibility with the CEL-Go implementation, the `base64Encode` and `base64Decode` functions now use dot notation: `base64.encode` and `base64.decode`. The old camel case names remain in place for backwards compatibility.

- New string functions: The following string manipulation functions were added to the CEL library: `startsWith`, `endsWith`, `stripPrefix`, and `stripSuffix`. These functions align with the Google CEL-Go strings extension.

- Null values fail: If a top-level variable returns a null value, the CEL expression now fails. Previously, null values always returned true. For example, the `has(jwt)` expression was previously successful if the JWT was missing or could not be found. Now, this expression fails.

- Logical operators: Logical `||` and `&&` operators now handle evaluation errors gracefully instead of propagating them. For example, `a || b` returns `true` if `a` is true even if `b` errors. Previously, the CEL expression failed.

Make sure to update and verify any existing CEL expressions that you use in your environment.

For more information, see the CEL expression reference.
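
To make the logical operator change concrete, here is a minimal Python sketch of error-tolerant `||` and `&&`. It is not agentgateway's implementation: `EvalError`, `cel_or`, and `cel_and` are hypothetical names, and the symmetric handling (an error on either side is ignored when the other side decides the result) is an assumption taken from the CEL language specification rather than from these release notes.

```python
class EvalError(Exception):
    """Stands in for a CEL evaluation error (hypothetical name)."""


def _evaluate(operand):
    """Run an operand, returning (value, error) instead of raising."""
    try:
        return operand(), None
    except EvalError as err:
        return None, err


def cel_or(left, right) -> bool:
    """True if either side is true; an error surfaces only if nothing is true."""
    lval, lerr = _evaluate(left)
    rval, rerr = _evaluate(right)
    if lval is True or rval is True:
        return True
    if lerr is not None:
        raise lerr
    if rerr is not None:
        raise rerr
    return False


def cel_and(left, right) -> bool:
    """False if either side is false; an error surfaces only if nothing is false."""
    lval, lerr = _evaluate(left)
    rval, rerr = _evaluate(right)
    if lval is False or rval is False:
        return False
    if lerr is not None:
        raise lerr
    if rerr is not None:
        raise rerr
    return True


def missing_claim():
    # Simulates an operand that fails, such as reading an absent field.
    raise EvalError("no such key")


print(cel_or(lambda: True, missing_claim))
print(cel_and(lambda: False, missing_claim))
```

When neither side decides the result, for example `false || <error>`, the sketch still raises. Expressions that relied on an error aborting evaluation are the ones worth re-testing.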

🌟 New features

Kubernetes Gateway API version 1.5.0

The Kubernetes Gateway API dependency is updated to support version 1.5.0. Gateway API 1.5 also comes with a number of new conformance tests; Agentgateway continues to be on the frontier of Gateway API support and passes all tests (standard, extended, and experimental).

This version introduces several changes, including:

- XListenerSets promoted to ListenerSets: The experimental XListenerSet API is promoted to the standard ListenerSet API in version 1.5.0. You must install the standard channel of the Kubernetes Gateway API to get the ListenerSet API definition. If you use XListenerSet resources in your setup today, update these resources to use the ListenerSet API instead.

- TLSRoute promotion: TLSRoute has been promoted from experimental to standard. If you are on the standard channel, you need to use `v1` instead of `v1alpha2`. The experimental channel can continue to use `v1alpha2`.

- AllowInsecureFallback mode for mTLS listeners: If you set up mTLS listeners on your agentgateway proxy, you can now configure the proxy to establish a TLS connection, even if the client TLS certificate could not be validated successfully. For more information, see the mTLS listener docs.

- CORS wildcard support: The `allowOrigins` field now supports wildcard `*` origins to allow any origin. For an example, see the CORS guide.

Autoscaling policies for agentgateway controller

You can now configure Horizontal Pod Autoscaler policies for the agentgateway control plane. To set up these policies, you use the horizontalPodAutoscaler field in the Helm chart.

Review the following Helm configuration example. For more information, see Advanced install settings.

Horizontal Pod Autoscaler:

Make sure to deploy the Kubernetes metrics-server in your cluster. The metrics-server retrieves metrics, such as CPU and memory consumption for your workloads. These metrics can be used by the HPA plug-in to determine if the pod must be scaled up or down.

In the following example, you want to have 1 control plane replica running at any given time. If the CPU utilization averages 80%, you want to gradually scale up your replicas. You can have a maximum of 5 replicas at any given time.

    :::yaml
    horizontalPodAutoscaler:
      minReplicas: 1
      maxReplicas: 5
      metrics:
      - type: Resource
        resource:
          name: cpu
          target:
            type: Utilization
            averageUtilization: 80
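
To get a feel for how these values interact, the sketch below applies the core replica formula from the Kubernetes Horizontal Pod Autoscaler documentation, `ceil(currentReplicas * currentMetric / targetMetric)`, clamped to the configured bounds. It is a simplification: `desired_replicas` is a hypothetical helper, and the real controller also applies a tolerance band, stabilization windows, and pod readiness checks that are omitted here.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_utilization: float,
    target_utilization: float = 80,
    min_replicas: int = 1,
    max_replicas: int = 5,
) -> int:
    """Simplified HPA calculation using the values from the example above."""
    raw = math.ceil(current_replicas * current_utilization / target_utilization)
    # Clamp to the minReplicas / maxReplicas bounds.
    return max(min_replicas, min(max_replicas, raw))


# One replica at 160% average CPU utilization against an 80% target.
print(desired_replicas(1, 160))
# Four replicas at 200% would ask for 10, but maxReplicas caps the result.
print(desired_replicas(4, 200))
```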

Simplified LLM configuration

A new top-level llm configuration section provides a simplified way to configure LLM providers. Instead of setting up the full binds, listeners, routes, and backends hierarchy, you can now define models directly in a flat structure. The simplified format defaults to port 4000.

The following example configures an OpenAI provider with a wildcard model match:

    :::yaml
    # yaml-language-server: $schema=https://agentgateway.dev/schema/config
    llm:
      models:
      - name: "*"
        provider: openAI
        params:
          apiKey: "$OPENAI_API_KEY"

| Setting | Description |
|---|---|
| `name` | The model name to match in incoming requests. When a client sends `"model": "<name>"`, the request is routed to this provider. Use `*` to match any model name. |
| `provider` | The LLM provider to use, such as `openAI`, `anthropic`, `bedrock`, `gemini`, or `vertex`. |
| `params.model` | The model name sent to the upstream provider. If set, this overrides the model from the request. If not set, the model from the request is passed through. |
| `params.apiKey` | The API key for authentication. You can reference environment variables using the `$VAR_NAME` syntax. |

You can also define model aliases to decouple client-facing model names from provider-specific identifiers:

    :::yaml
    llm:
      models:
      - name: fast
        provider: openAI
        params:
          model: gpt-4o-mini
          apiKey: "$OPENAI_API_KEY"
      - name: smart
        provider: openAI
        params:
          model: gpt-4o
          apiKey: "$OPENAI_API_KEY"
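
The matching and override rules from the settings table can be sketched in a few lines of Python. This is an illustration, not agentgateway's code: `resolve` is a hypothetical function, the `MODELS` list combines the alias example with a wildcard entry purely for demonstration, and the "first matching entry wins" ordering is an assumption that the release notes do not specify.

```python
from typing import Optional, Tuple

# Mirrors the alias example above, plus a wildcard entry for illustration.
MODELS = [
    {"name": "fast", "provider": "openAI", "params": {"model": "gpt-4o-mini"}},
    {"name": "smart", "provider": "openAI", "params": {"model": "gpt-4o"}},
    {"name": "*", "provider": "openAI", "params": {}},
]


def resolve(models: list, requested: str) -> Optional[Tuple[str, str]]:
    """Return (provider, upstream model) for the requested model name."""
    for entry in models:
        # Assumption: entries are checked in order and the first match wins.
        if entry["name"] in (requested, "*"):
            # params.model overrides the requested name when it is set;
            # otherwise the requested name is passed through.
            upstream = entry.get("params", {}).get("model", requested)
            return entry["provider"], upstream
    return None


print(resolve(MODELS, "fast"))
print(resolve(MODELS, "gpt-4.1"))
```

With aliases like these, clients can keep sending `fast` or `smart` while the upstream model behind each name changes in configuration only.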

Policies such as rate limiting and authentication can be set at the llm level to apply to all models:

    :::yaml
    llm:
      policies:
        localRateLimit:
        - maxTokens: 10
          tokensPerFill: 1
          fillInterval: 60s
          type: tokens
      models:
      - name: "*"
        provider: openAI
        params:
          apiKey: "$OPENAI_API_KEY"
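
The field names in `localRateLimit` suggest a token bucket. The sketch below shows how such a bucket behaves with the values from the example, under the assumption that `maxTokens` is the bucket capacity and that `tokensPerFill` units are added every `fillInterval`. `TokenBucket` is a hypothetical class; how agentgateway actually accounts for LLM tokens (for instance, whether usage is charged before or after the response) is not covered here.

```python
import time


class TokenBucket:
    """Minimal token bucket; the clock is injectable so it can be tested."""

    def __init__(self, max_tokens=10, tokens_per_fill=1, fill_interval=60.0,
                 clock=time.monotonic):
        self.max_tokens = max_tokens
        self.tokens_per_fill = tokens_per_fill
        self.fill_interval = fill_interval
        self.clock = clock
        self.tokens = max_tokens  # start with a full bucket
        self.last_fill = clock()

    def _refill(self):
        # Add tokens for every whole fill interval that has elapsed.
        elapsed = self.clock() - self.last_fill
        fills = int(elapsed // self.fill_interval)
        if fills > 0:
            self.tokens = min(self.max_tokens,
                              self.tokens + fills * self.tokens_per_fill)
            self.last_fill += fills * self.fill_interval

    def try_consume(self, amount=1) -> bool:
        """Take `amount` units if available; otherwise reject the request."""
        self._refill()
        if amount > self.tokens:
            return False
        self.tokens -= amount
        return True


# Drive the bucket with a fake clock to show the refill behavior.
now = [0.0]
bucket = TokenBucket(clock=lambda: now[0])
print(bucket.try_consume(10))  # uses the whole initial budget
print(bucket.try_consume(1))   # rejected until the next fill
now[0] = 60.0
print(bucket.try_consume(1))   # one unit was added after 60 seconds
```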

The traditional route-based configuration (binds/listeners/routes) remains fully supported for advanced use cases that require path-based routing or custom endpoints.

For more information, see the provider setup guides such as OpenAI, Anthropic, and Bedrock.

GRPCRoute support

You can now attach GRPCRoutes to your agentgateway proxy to route traffic to gRPC endpoints. For more information, see gRPC routing.

PreRouting phase support for policies

You can now use the phase: PreRouting setting on JWT, basic auth, API key authentication, and transformation policies. This setting applies policies before a routing decision is made, which allows the policies to influence how requests are routed. Note that the policy must target a Gateway rather than an HTTPRoute.

A key use case is body-based routing for LLM requests. The following example extracts the model field from a JSON request body and sets it as a header, which can then be used for routing decisions:

    :::yaml
    apiVersion: agentgateway.dev/v1alpha1
    kind: AgentgatewayPolicy
    metadata:
      name: body-based-routing
    spec:
      targetRefs:
      - kind: Gateway
        name: my-gateway
        group: gateway.networking.k8s.io
      traffic:
        phase: PreRouting
        transformation:
          request:
            set:
            - name: X-Gateway-Model-Name
              value: 'json(request.body).model'

This allows you to route requests to different backends based on the model name specified in the request body. For example, you could route GPT-4 requests to one backend and Claude requests to another:

    :::yaml
    apiVersion: gateway.networking.k8s.io/v1
    kind: HTTPRoute
    metadata:
      name: route-by-model
    spec:
      parentRefs:
      - name: my-gateway
      rules:
      - matches:
        - headers:
          - name: X-Gateway-Model-Name
            value: gpt-4
        backendRefs:
        - name: openai-backend
      - matches:
        - headers:
          - name: X-Gateway-Model-Name
            value: claude-3
        backendRefs:
        - name: anthropic-backend

For more details on this pattern, see the body-based routing blog post.
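
The two steps of this pattern (derive a header from the body, then match routes on that header) can be sketched in Python as follows. This only models the data flow: `pre_routing_headers` and `select_backend` are hypothetical functions, and the exact-match lookup assumes the default exact header matching of HTTPRoute.

```python
import json
from typing import Optional

HEADER = "X-Gateway-Model-Name"

# Header value to backend name, mirroring the HTTPRoute rules above.
ROUTES = {"gpt-4": "openai-backend", "claude-3": "anthropic-backend"}


def pre_routing_headers(body: bytes) -> dict:
    """PreRouting step: copy the JSON body's model field into a header."""
    payload = json.loads(body)
    model = payload.get("model") if isinstance(payload, dict) else None
    return {HEADER: model} if isinstance(model, str) else {}


def select_backend(headers: dict) -> Optional[str]:
    """Routing step: exact match on the header value, None if no rule matches."""
    return ROUTES.get(headers.get(HEADER))


request_body = b'{"model": "claude-3", "messages": []}'
headers = pre_routing_headers(request_body)
print(headers, select_backend(headers))
```

Because the header is set before routing, the route rules themselves stay ordinary header matches and need no knowledge of the request body.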

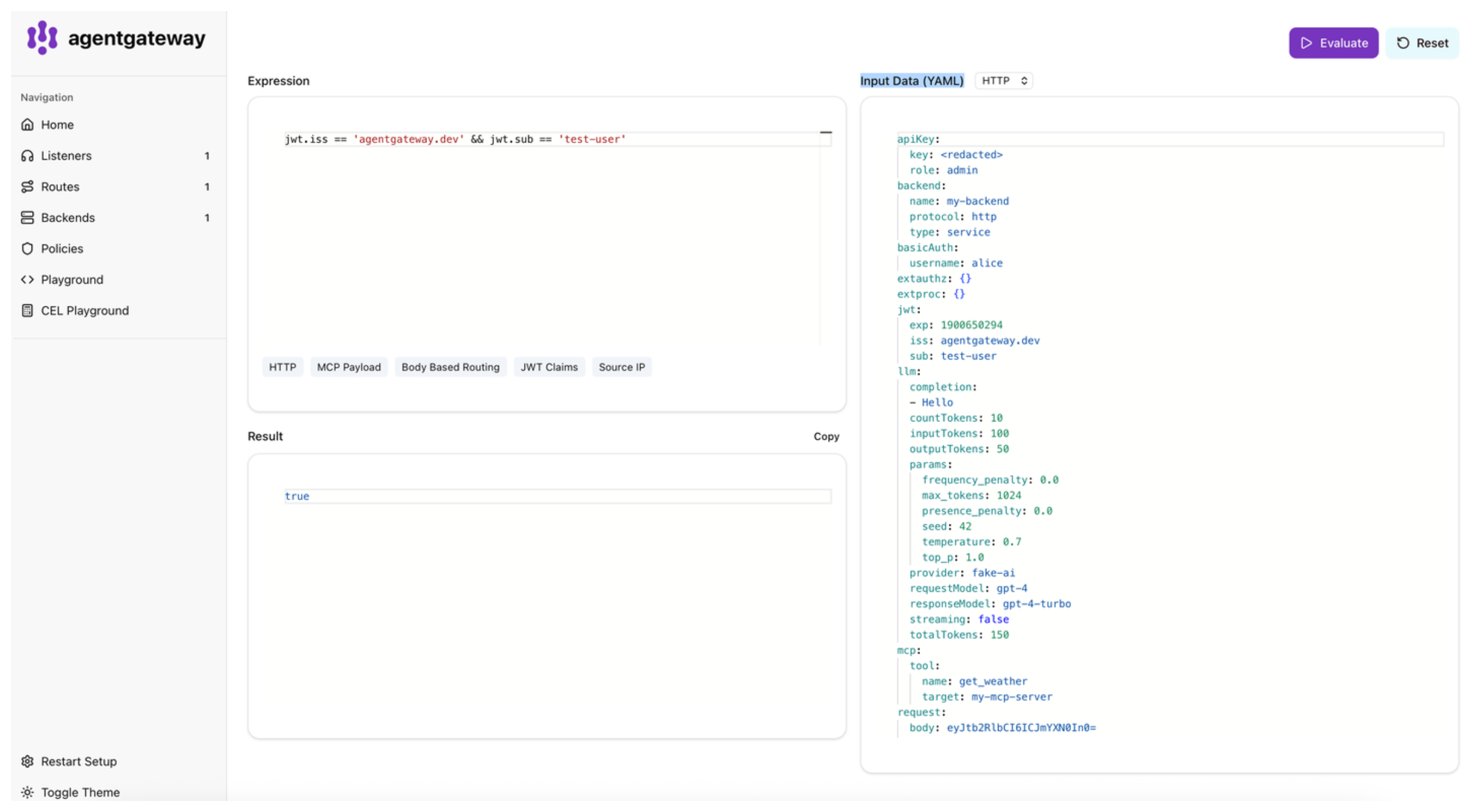

Built-in CEL playground

The agentgateway admin UI now includes a built-in CEL playground for testing CEL expressions against agentgateway's CEL runtime. The playground supports the custom functions and variables specific to agentgateway that are not available in generic CEL environments. To open it, select CEL Playground in the admin UI sidebar.

For more information, see CEL playground.

LLM request transformations

You can now use CEL expressions to dynamically compute and set fields in LLM requests. This allows you to enforce policies, such as capping token usage, without changing client code.

The following example caps max_tokens to 10 for all requests to the openai HTTPRoute:

    :::yaml
    apiVersion: agentgateway.dev/v1alpha1
    kind: AgentgatewayPolicy
    metadata:
      name: cap-max-tokens
    spec:
      targetRefs:
      - group: gateway.networking.k8s.io
        kind: HTTPRoute
        name: openai
      backend:
        ai:
          transformations:
          - field: max_tokens
            expression: "min(llmRequest.max_tokens, 10)"
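
The effect of the `min(llmRequest.max_tokens, 10)` expression on a request body can be pictured with the short Python sketch below. `cap_max_tokens` is a hypothetical helper, and leaving requests without a `max_tokens` field untouched is an assumption made for this sketch; the release notes do not describe how the policy treats a missing field.

```python
def cap_max_tokens(llm_request: dict, limit: int = 10) -> dict:
    """Return a copy of the request with max_tokens capped at `limit`."""
    capped = dict(llm_request)
    # Assumption: a request that omits max_tokens is passed through unchanged.
    if "max_tokens" in capped:
        capped["max_tokens"] = min(capped["max_tokens"], limit)
    return capped


print(cap_max_tokens({"model": "gpt-4o", "max_tokens": 4096}))
print(cap_max_tokens({"model": "gpt-4o", "max_tokens": 5}))
```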

Full Changelog

- chore(ui/deps): bump @a2a-js/sdk and @modelcontextprotocol/sdk by @markuskobler in https://github.com/agentgateway/agentgateway/pull/919

- xds: handle hostname updates in route by @howardjohn in https://github.com/agentgateway/agentgateway/pull/924

- Bring in controller from kgateway by @howardjohn in https://github.com/agentgateway/agentgateway/pull/927

- Bump strands example dependencies by @howardjohn in https://github.com/agentgateway/agentgateway/pull/929

- local: fix non-unique listener key by @howardjohn in https://github.com/agentgateway/agentgateway/pull/922

- cargo: bump dependencies by @howardjohn in https://github.com/agentgateway/agentgateway/pull/930

- fix: respect enableIpv6 config for XDS route socket bindings (#3) by @AyRickk in https://github.com/agentgateway/agentgateway/pull/928

- controller: inference pool status v2 by @howardjohn in https://github.com/agentgateway/agentgateway/pull/932

- Add PreRouting phase support for authentication policies by @Copilot in https://github.com/agentgateway/agentgateway/pull/934

- Makefile fixes in controller by @npolshakova in https://github.com/agentgateway/agentgateway/pull/931

- apikey: use deterministic ordering by @howardjohn in https://github.com/agentgateway/agentgateway/pull/936

- Add more provider guardrail support by @npolshakova in https://github.com/agentgateway/agentgateway/pull/918

- Cherrypick HPA/PDB RBAC fix by @howardjohn in https://github.com/agentgateway/agentgateway/pull/939

- feat(compression): add streaming decompression for SSE responses by @apexlnc in https://github.com/agentgateway/agentgateway/pull/873

- Fix OpenAPI tool response by populating 'content' with serialized 'structuredContent' by @emilyszabo27 in https://github.com/agentgateway/agentgateway/pull/913

- compresion: tune pre-allocation amount by @howardjohn in https://github.com/agentgateway/agentgateway/pull/940

- body: keep size hints when we peek by @howardjohn in https://github.com/agentgateway/agentgateway/pull/941

- feat(llm): add embeddings support for Bedrock and Vertex by @apexlnc in https://github.com/agentgateway/agentgateway/pull/912

- ci: add cache debug workflow by @howardjohn in https://github.com/agentgateway/agentgateway/pull/945

- krt: fix ancestor by @howardjohn in https://github.com/agentgateway/agentgateway/pull/946

- Run e2e tests in CI by @howardjohn in https://github.com/agentgateway/agentgateway/pull/938

- Remove deadcode from e2e tests by @howardjohn in https://github.com/agentgateway/agentgateway/pull/948

- Fix debug cache workflow by @howardjohn in https://github.com/agentgateway/agentgateway/pull/949

- ci: debug e2e tests by @howardjohn in https://github.com/agentgateway/agentgateway/pull/947

- Docs: use URL for schemas by @howardjohn in https://github.com/agentgateway/agentgateway/pull/954

- local config: add golden unit tests by @howardjohn in https://github.com/agentgateway/agentgateway/pull/951

- Fix: CORS policy should run before rate limiting by @corinapurcarea in https://github.com/agentgateway/agentgateway/pull/926

- Align `go.mod` go version with recommendations by @howardjohn in https://github.com/agentgateway/agentgateway/pull/956

- ci: pin versions of languages we use by @howardjohn in https://github.com/agentgateway/agentgateway/pull/955

- Bump go.mod, notably GIE to v1.3 by @howardjohn in https://github.com/agentgateway/agentgateway/pull/953

- [docs] yaml schema to config examples by @artberger in https://github.com/agentgateway/agentgateway/pull/965

- cel: add a few more string functions by @howardjohn in https://github.com/agentgateway/agentgateway/pull/958

- Control plane: guardrail policies by @npolshakova in https://github.com/agentgateway/agentgateway/pull/952

- fix: AgentgatewayParameters merge(GatewayClass, Gateway) bug by @chandler-solo in https://github.com/agentgateway/agentgateway/pull/969

- fix(llm): improve vertex/anthropic model handling by @markuskobler in https://github.com/agentgateway/agentgateway/pull/959

- refactor: helm chart cleanup to avoid merge calls that do not merge by @chandler-solo in https://github.com/agentgateway/agentgateway/pull/974

- fix(ratelimit): skip remote rate-limit call when descriptor evaluation fails by @corinapurcarea in https://github.com/agentgateway/agentgateway/pull/920

- llm: add reasoning_tokens, cached_input_tokens, cache_creation_input_tokens by @howardjohn in https://github.com/agentgateway/agentgateway/pull/957

- Add more kgateway pieces by @npolshakova in https://github.com/agentgateway/agentgateway/pull/968

- deps: move off git dependency for GCP auth by @howardjohn in https://github.com/agentgateway/agentgateway/pull/984

- refactor: split out MCP authentication into its own file by @howardjohn in https://github.com/agentgateway/agentgateway/pull/982

- test(deployer): add cookbook recipe helm overlay tests by @chandler-solo in https://github.com/agentgateway/agentgateway/pull/976

- mcp: be leniant on 204 vs 202 by @howardjohn in https://github.com/agentgateway/agentgateway/pull/986

- fix(llm): enhance error handling and response parsing by @den-vasyliev in https://github.com/agentgateway/agentgateway/pull/977

- Fix accessing JWT in log with MCP Authentication by @howardjohn in https://github.com/agentgateway/agentgateway/pull/975

- cel: drop duplicate functions by @howardjohn in https://github.com/agentgateway/agentgateway/pull/991

- fix: fix gcloud auth regression and bump rmcp by @howardjohn in https://github.com/agentgateway/agentgateway/pull/992

- dev: tilt by @yuval-k in https://github.com/agentgateway/agentgateway/pull/995

- mcp: box large stacks to avoid stack overflows by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1000

- deps: bump to rmcp 0.16 by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1002

- mcp auth: default to strict by @howardjohn in https://github.com/agentgateway/agentgateway/pull/997

- deps: fix gcloud deps again by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1003

- Link fixes by @kristin-kronstain-brown in https://github.com/agentgateway/agentgateway/pull/1005

- mcp: fix regression in error handling by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1004

- use references in json schema by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1008

- Removes envoy-related code by @chandler-solo in https://github.com/agentgateway/agentgateway/pull/989

- really really fix gcloud auth by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1012

- controller: if there are no listeners, deploy a dummy port by @howardjohn in https://github.com/agentgateway/agentgateway/pull/988

- fix(ui): resolve A2A policies onto routes in XDS/Kubernetes mode by @syn-zhu in https://github.com/agentgateway/agentgateway/pull/979

- feat(llm): adaptive thinking and structured outputs for claude providers by @apexlnc in https://github.com/agentgateway/agentgateway/pull/973

- temporarily drop GIE conformance by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1015

- Add Agentgateway Azure Backend Auth by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1016

- local config: add simplified LLM configuration by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1007

- cel: expose better compilation error messages by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1013

- llm: poll logs instead of fetching once by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1017

- Adds Syntax Directive to Dockerfile by @danehans in https://github.com/agentgateway/agentgateway/pull/904

- cel: Err resilient OR & AND operators by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1021

- Move CORS evaluation before authentication and authorization by @corinapurcarea in https://github.com/agentgateway/agentgateway/pull/1020

- update inference go mod to re-enable conformance tests by @filintod in https://github.com/agentgateway/agentgateway/pull/1024

- hyper-util: simplify create removing parts we don't need by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1025

- fix: exclude Map from literal expression optimization by @markuskobler in https://github.com/agentgateway/agentgateway/pull/1027

- krt: fix debug endpoint with JWKS by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1032

- crd gen: add some additional markers by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1019

- deps: run go mod tidy by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1035

- kgateway: expose krt debugger by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1034

- add go mod tidy check by @markuskobler in https://github.com/agentgateway/agentgateway/pull/1036

- fix(ui): upgrade npm packages by @markuskobler in https://github.com/agentgateway/agentgateway/pull/1039

- add new 'oneshot' command for testing, etc by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1037

- mcp: update policies on each request instead of on each session by @howardjohn in https://github.com/agentgateway/agentgateway/pull/896

- e2e: do not always pull website fetcher by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1046

- cel: fix logging request breaking passthrough auth by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1049

- gw controller: call controllerExtension.register by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1050

- cel: fix checking types without materializing by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1044

- fix: builds on macos by @markuskobler in https://github.com/agentgateway/agentgateway/pull/1056

- llm: always rate limit on streaming mode by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1047

- llm: add new transformation policy for LLM request by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1041

- Fix nested CEL expressions in RateLimit descriptor value by @ymesika in https://github.com/agentgateway/agentgateway/pull/1054

- cel: more materialize fixes by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1057

- Feat(jwt): Add requiredClaims option for the authentication policy by @corinapurcarea in https://github.com/agentgateway/agentgateway/pull/897

- Feat/Add failure mode for global rate limiting by @corinapurcarea in https://github.com/agentgateway/agentgateway/pull/935

- llm: allow overriding port by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1062

- Push charts as part of release. by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1061

- fix: Overlays can delete map elements with 'null' by @chandler-solo in https://github.com/agentgateway/agentgateway/pull/1065

- upgrade github actions and standardize on explicit latest os releases by @markuskobler in https://github.com/agentgateway/agentgateway/pull/1052

- mcp: use stateless sessions when upstream doesn't use sessions by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1059

- fix deny-only MCP authorization policies incorrectly deny all resources by @iplay88keys in https://github.com/agentgateway/agentgateway/pull/1058

- Add support to fetch openapi schema using url by @twdsilva in https://github.com/agentgateway/agentgateway/pull/1060

- release: add more info to the release by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1067

- route: do not split up routes by match by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1063

- Fix windows name in release by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1069

- Fix version number in release by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1072

- Do not use a stale version in cargo by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1075

- release: properly tag images with 'v' prefix by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1074

- Revert "fix: Overlays can delete map elements with 'null'" by @chandler-solo in https://github.com/agentgateway/agentgateway/pull/1079

- client: do not swallow logs from Body by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1076

- Copy tcp routes when inserting listener by @i11 in https://github.com/agentgateway/agentgateway/pull/1085

- Provide Istio mesh config to XDS builder by @ymesika in https://github.com/agentgateway/agentgateway/pull/1086

- Better support for policies when connecting to policy backends by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1091

- Improvements to tracing and logging config by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1093

- Fix overlapping keys with local LLM mode by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1097

- Gateway API v1.5.0 by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1068

- feat(llm): accept /v1/messages InputFormat for chat/completions backends by @apexlnc in https://github.com/agentgateway/agentgateway/pull/909

- Remove dead functions in tracing by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1099

- llm: re-organize golden files by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1106

- release: do not put both versions in the notes, and use v prefix consistently by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1101

- Implement OTel support for access logs by @krisztianfekete in https://github.com/agentgateway/agentgateway/pull/1080

- Fix haiku streaming token count by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1108

- e2e: consolidate test helpers into a single unified deployment by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1098

- add missing event handler to deployer by @lgadban in https://github.com/agentgateway/agentgateway/pull/1114

- llm: further improve golden tests by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1118

- Fix e2e test setup on mac by @npolshakova in https://github.com/agentgateway/agentgateway/pull/1117

- Clean up kgateway-system names, add unit test by @npolshakova in https://github.com/agentgateway/agentgateway/pull/1120

- Bump istio dep, pulling in TLSRoute v1 by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1123

- llm: more tests by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1122

- Bump ui deps by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1124

- fix: update XListenerSet to ListenerSet by @markuskobler in https://github.com/agentgateway/agentgateway/pull/1128

- Minimal cargo deps update by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1125

- Add missing enum to AGW policy by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1129

- release: finally get the v prefix properly? by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1133

- Add transformation `metadata` support by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1130

- Fixed ref grant krt to handle multiple entries under .spec.to by @rudrakhp in https://github.com/agentgateway/agentgateway/pull/1141

- Prefix logs in setup kind script by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1132

- llm: fix setting LLM info for realtime by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1140

- Add aws backend by @christian-posta in https://github.com/agentgateway/agentgateway/pull/1126

- feat: remove ring & rustls-pemfile dependencies by @markuskobler in https://github.com/agentgateway/agentgateway/pull/1145

- Add cel-based eviction policy for backends by @npolshakova in https://github.com/agentgateway/agentgateway/pull/1105

- controller: Tie Backend to specific Gateways by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1147

- Include invalid gateways in protocol selection by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1137

- Bump go version by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1154

- Use agentgateway name in api instead of kgw by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1158

- Bump async-openai by @npolshakova in https://github.com/agentgateway/agentgateway/pull/1149

- performance: micro-optimize some hot-paths by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1136

- Support both `agentgateway.dev/mcp` and `kgateway.dev/mcp` as appProtocol values by @Copilot in https://github.com/agentgateway/agentgateway/pull/1168

- Add cache token usage to metrics by @Sodman in https://github.com/agentgateway/agentgateway/pull/1144

- fix: use host header port for DFP backend destination by @OS-kelvincampelo in https://github.com/agentgateway/agentgateway/pull/1142

- controller: refactor all tests to use consistent golden file pattern by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1169

- tests: use JSON for construction instead of non-ergonomic types by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1146

- Bump go and python deps by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1131

- Fix AgentgatewayPolicy CRD HostRewrite property by @naanselmo in https://github.com/agentgateway/agentgateway/pull/1167

- controller: reconcile per-gateway session keys by @apexlnc in https://github.com/agentgateway/agentgateway/pull/1160

- llm: add a new Detect mode for passthrough by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1051

- fix: prompt guard panics when builtin matches overlap by @markuskobler in https://github.com/agentgateway/agentgateway/pull/1172

- Add more eviction config by @npolshakova in https://github.com/agentgateway/agentgateway/pull/1153

- Rust waypoint improvements by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1179

- fix(celx): use Unicode char indices in indexOf and lastIndexOf by @markuskobler in https://github.com/agentgateway/agentgateway/pull/1180

- add filterKeys cel functions by @filintod in https://github.com/agentgateway/agentgateway/pull/1174

- fix(celx): correctly handle lastIndexOf by @markuskobler in https://github.com/agentgateway/agentgateway/pull/1184

- Partial Revert "Gateway API v1.5.0 (#1068)" (accidental changes) by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1188

- crdgen: fix embedded types by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1190

- cel: accept padded or unpadded in base64.decode by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1181

- Add testing and refactoring for references by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1192

- crdgen: allow overriding an embedded fields rules by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1193

- Add support for backend tunnel (CONNECT) by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1191

- Drop more 'kgateway' names by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1198

- Bind Policies to specific Gateways by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1157

- chore: bump inference extension to v1.4.0-rc.2 by @danehans in https://github.com/agentgateway/agentgateway/pull/1201

- fix(telemetry): logged error when otlpEndpoint has connectivity issues by @markuskobler in https://github.com/agentgateway/agentgateway/pull/1199

- fix(ui): npm updates by @markuskobler in https://github.com/agentgateway/agentgateway/pull/1207

- docs: cleanup more kgateway references by @markuskobler in https://github.com/agentgateway/agentgateway/pull/1210

- fix: construct RFC 8414 metadata URL correctly for path-based issuers by @kimsehwan96 in https://github.com/agentgateway/agentgateway/pull/1206

- cel: use native Timestamp for startTime and endTime by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1186

- simple llm mode: add overrides and tokenize by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1209

- Add OTel access log config and fix initialization by @npolshakova in https://github.com/agentgateway/agentgateway/pull/1182

- fix select branch getting stuck by @filintod in https://github.com/agentgateway/agentgateway/pull/1203

- a2a: decouple support from a2a_sdk by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1211

- cel: add support for header modifier by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1171

- tilt: fix crds too big with helm_resource by @yuval-k in https://github.com/agentgateway/agentgateway/pull/1217

- refactor: break out listenerset build to be a standard function by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1214

- make EINVAL not fatal on mac by @filintod in https://github.com/agentgateway/agentgateway/pull/1216

- garbage collection for unneeded pdb/hpa/vpa by @jordanbecketmoore in https://github.com/agentgateway/agentgateway/pull/1183

- fix: honor InferencePool appProtocol in translation by @danehans in https://github.com/agentgateway/agentgateway/pull/1219

- fix oauth bug for anthropic subscription by @filintod in https://github.com/agentgateway/agentgateway/pull/1220

- misc cleanup on hyper-util fork by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1224

- golden tests: dump full policy by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1226

- Fix transitive dependencies from Gateway <---> Backend by @howardjohn in https://github.com/agentgateway/agentgateway/pull/1227
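For readers unfamiliar with the RFC 8414 entry above (#1206): the specification says that when an issuer identifier has a path component, the well-known suffix is inserted between the host and the path, after removing any trailing slash, rather than appended to the end. The sketch below illustrates that rule only. It is not agentgateway's implementation, and the function name `metadata_url` is hypothetical.

```python
from urllib.parse import urlsplit, urlunsplit

# Well-known URI suffix registered by RFC 8414.
WELL_KNOWN = "/.well-known/oauth-authorization-server"


def metadata_url(issuer: str) -> str:
    """Build the RFC 8414 metadata URL for an issuer (illustrative helper)."""
    parts = urlsplit(issuer)
    # Remove any terminating "/" from the issuer path, per RFC 8414 section 3.1.
    path = parts.path.rstrip("/")
    # Insert the well-known segment between the host and the issuer path.
    return urlunsplit((parts.scheme, parts.netloc, WELL_KNOWN + path, "", ""))


print(metadata_url("https://example.com/issuer1"))
```

A naive implementation that appends the suffix to the issuer would instead produce `https://example.com/issuer1/.well-known/oauth-authorization-server`, which is the OpenID Connect Discovery style of layout and not what RFC 8414 specifies for path-based issuers.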

New Contributors

- @AyRickk made their first contribution in https://github.com/agentgateway/agentgateway/pull/928

- @emilyszabo27 made their first contribution in https://github.com/agentgateway/agentgateway/pull/913

- @corinapurcarea made their first contribution in https://github.com/agentgateway/agentgateway/pull/926

- @chandler-solo made their first contribution in https://github.com/agentgateway/agentgateway/pull/969

- @den-vasyliev made their first contribution in https://github.com/agentgateway/agentgateway/pull/977

- @kristin-kronstain-brown made their first contribution in https://github.com/agentgateway/agentgateway/pull/1005

- @syn-zhu made their first contribution in https://github.com/agentgateway/agentgateway/pull/979

- @filintod made their first contribution in https://github.com/agentgateway/agentgateway/pull/1024

- @iplay88keys made their first contribution in https://github.com/agentgateway/agentgateway/pull/1058

- @twdsilva made their first contribution in https://github.com/agentgateway/agentgateway/pull/1060

- @lgadban made their first contribution in https://github.com/agentgateway/agentgateway/pull/1114

- @rudrakhp made their first contribution in https://github.com/agentgateway/agentgateway/pull/1141

- @Sodman made their first contribution in https://github.com/agentgateway/agentgateway/pull/1144

- @OS-kelvincampelo made their first contribution in https://github.com/agentgateway/agentgateway/pull/1142

- @naanselmo made their first contribution in https://github.com/agentgateway/agentgateway/pull/1167

- @kimsehwan96 made their first contribution in https://github.com/agentgateway/agentgateway/pull/1206

- @jordanbecketmoore made their first contribution in https://github.com/agentgateway/agentgateway/pull/1183

Full Changelog: https://github.com/agentgateway/agentgateway/compare/v0.12.0...v1.0.0